Most online spaces start with good intentions. Then they drift. Without structure, conversations turn into noise, spam takes over, and the people who most need to connect quietly leave. For African tech professionals, founders, and entrepreneurs, this is not just an inconvenience. It is a real barrier to growth. Tiered coordination and hybrid AI-human moderation have been reported to reduce violations by 40%, which suggests that the difference between a thriving network and a dead forum often comes down to one thing: moderation. This article breaks down why it matters, how it works, and what you can do to build or join spaces that actually deliver results.

Table of Contents

- Why moderation matters in online tech communities

- How moderation works: Emerging best practices

- Hybrid AI-human moderation: Scaling trust and nuance

- Fostering innovation and collaboration through moderation

- Common pitfalls and optimizing for growth

- Take your next step: Join or start a thriving discussion space

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Moderation fosters trust | Enforced guidelines and hybrid moderation models keep online spaces safe and constructive. |
| Hybrid approach scales | Combining AI automation with human oversight balances efficiency and cultural nuance. |
| Innovation needs structure | Clear objectives and evidence-based practices turn moderated spaces into engines of networking and growth. |
| Peer solutions matter | Empowering community members to resolve conflicts reduces workload and strengthens engagement. |

Why moderation matters in online tech communities

Unmoderated forums are not neutral. They actively work against the people who need them most. For African tech professionals navigating trust barriers, limited local investor networks, and fragmented digital infrastructure, a chaotic online space is worse than no space at all.

Here is what structured moderation actually delivers:

- Safety and trust: Clear rules reduce harassment and spam, making it easier for members to speak openly.

- Higher quality networking: Moderated spaces facilitate networking and structured forums for co-founders, funding discussions, and policy conversations.

- Measurable results: Enforcing clear guidelines using tiered moderation and combining AI tools with human oversight creates safer, more effective environments.

- Growth for startups: Structure is not just protection. It is a growth driver. Online networking communities built on clear rules attract serious contributors.

"Moderation is not just policing. It is the foundation for innovative communities."

The AI-human moderation research confirms this. Communities with active moderation see higher engagement, better retention, and more meaningful collaboration. For African founders building across borders, that is not a small thing. It is the whole game. Joining a professional platform built on these principles gives you a real head start.

How moderation works: Emerging best practices

Knowing why moderation matters is one thing. Understanding how it actually works is what lets you build or choose the right space.

Effective moderation follows a clear sequence:

- Set proactive rules before launch. Vague guidelines invite loopholes. Specific, enforceable rules set expectations from day one.

- Detect problems early. Proactive early detection and peer-to-peer resolution can decrease moderator workload by 60% and prevent 60% of conflicts.

- Use tiered responses. Warnings come first, then temporary restrictions, then permanent bans. This keeps the community fair and gives members a chance to correct behavior.

- Enable private de-escalation. Direct messaging between moderators and members resolves most issues before they go public.

- Combine automation with human judgment. AI filters manage spam well, but humans are needed for nuance like sarcasm or cultural context.
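The tiered-response step above can be sketched in a few lines of Python. This is a minimal illustration, not a production system: the names `Action`, `MemberRecord`, and `next_action` are hypothetical, and the assumption that each confirmed violation moves a member exactly one tier up the ladder is ours. The article specifies only the order (warning, temporary restriction, permanent ban).

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    WARNING = "warning"
    TEMPORARY_RESTRICTION = "temporary_restriction"
    PERMANENT_BAN = "permanent_ban"


# Assumed ladder: one step per confirmed violation. Real communities may
# allow several warnings or let old violations expire.
ESCALATION_LADDER = [
    Action.WARNING,
    Action.TEMPORARY_RESTRICTION,
    Action.PERMANENT_BAN,
]


@dataclass
class MemberRecord:
    member_id: str
    violations: list[str] = field(default_factory=list)


def next_action(record: MemberRecord, reason: str) -> Action:
    """Record a confirmed violation and return the tier that applies to it."""
    record.violations.append(reason)
    # First violation maps to index 0, second to index 1, and every
    # violation from the third onward stays on the final tier.
    tier = min(len(record.violations), len(ESCALATION_LADDER)) - 1
    return ESCALATION_LADDER[tier]


member = MemberRecord("member-123")
print(next_action(member, "promotional link in main feed"))  # Action.WARNING
print(next_action(member, "repeat promotional link"))        # Action.TEMPORARY_RESTRICTION
print(next_action(member, "spam after restriction"))         # Action.PERMANENT_BAN
```

Keeping the ladder as data rather than hard-coded branches makes it easy to publish alongside the community rules, which supports the transparency that tiered moderation depends on.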

The Reddit moderation study shows that peer-driven moderation, where trusted community members share the load, scales far better than top-down enforcement alone. This matters for African tech communities that often grow fast and cross multiple languages and cultures.

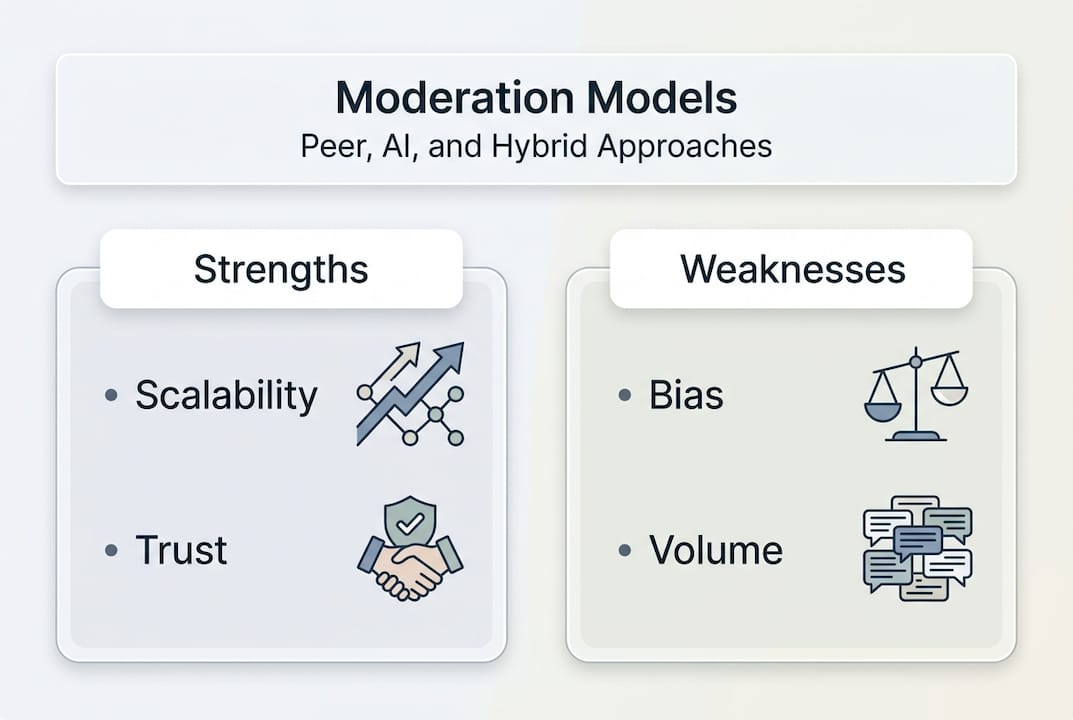

| Moderation method | Strengths | Weaknesses |
|---|---|---|
| Rules-only | Simple to implement | Easily ignored without enforcement |
| Automated only | Fast, scalable | Misses cultural nuance |
| Human only | High nuance | Does not scale well |
| Tiered hybrid | Scalable and nuanced | Requires setup investment |

Pro Tip: Write your community rules as specific behaviors, not values. "No promotional links in the main feed" is enforceable. "Be respectful" is not. Learn more about modern moderation methods and how to launch online discussions the right way.
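To see why a behavior-based rule is enforceable, consider how "No promotional links in the main feed" might translate into a check. The sketch below is hypothetical: the channel name `main-feed`, the URL pattern, and the function name are assumptions, and it treats any link as promotional, which is a deliberate simplification. There is no comparable test you could write for "Be respectful."

```python
import re

# Assumed pattern: matches http(s) URLs and bare "www." links.
URL_PATTERN = re.compile(r"https?://\S+|www\.\S+", re.IGNORECASE)


def violates_no_links_in_main_feed(channel: str, text: str) -> bool:
    """Return True when a post in the main feed contains a link."""
    return channel == "main-feed" and URL_PATTERN.search(text) is not None


print(violates_no_links_in_main_feed("main-feed", "Try https://example.com today"))  # True
print(violates_no_links_in_main_feed("showcase", "Try https://example.com today"))   # False
```

A check like this only flags candidates. Deciding whether a flagged link is actually promotional is still a job for a human moderator.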

Hybrid AI-human moderation: Scaling trust and nuance

As communities grow, the moderation challenge shifts. You cannot manually review every post. But you also cannot hand everything to an algorithm and expect it to understand context.

Here is how the three main models compare:

| Model | Speed | Nuance | Scalability | Trust level |
|---|---|---|---|---|
| AI only | Very fast | Low | Very high | Moderate |
| Human only | Slow | Very high | Low | High |
| Hybrid | Fast | High | High | Very high |

Manual moderation struggles with volume, while AI automatically filters spam but lacks nuance. Post-guidance AI increases successful posts by 5.8% and reduces overall workload. That is a meaningful gain for any growing community.
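One common way to combine the two is confidence-based routing: the automated filter acts alone only when it is very sure, and everything uncertain goes to a person. The sketch below illustrates that idea under stated assumptions. The `spam_score` function is a keyword-counting placeholder standing in for a real classifier, and the thresholds `remove_at` and `review_at` are arbitrary values chosen for the example, not recommendations from the research cited here.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    text: str


def spam_score(post: Post) -> float:
    """Placeholder scorer returning 0.0 to 1.0; a real system would use a model."""
    spam_markers = ("buy now", "free money", "click here")
    hits = sum(marker in post.text.lower() for marker in spam_markers)
    return min(1.0, hits / 2)


def route(post: Post, remove_at: float = 0.9, review_at: float = 0.4) -> str:
    """Auto-remove only clear spam; send uncertain posts to a human moderator."""
    score = spam_score(post)
    if score >= remove_at:
        return "auto_remove"
    if score >= review_at:
        return "human_review"
    return "publish"


print(route(Post("1", "Looking for a technical co-founder in Accra")))  # publish
print(route(Post("2", "Click here to see our pitch deck")))             # human_review
print(route(Post("3", "FREE MONEY!!! Click here")))                     # auto_remove
```

The middle band is where human judgment matters most: the second post trips a spam marker but is plausibly legitimate, so a moderator who understands the context makes the call instead of the filter.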

The real advantage of hybrid moderation is trust. Hybrid AI-human moderation enables scale while maintaining the trust essential for effective networking. For African tech communities that span Nigeria, Kenya, Ghana, South Africa, and beyond, cultural nuance is not optional. It is essential.

Key stat: Hybrid moderation models reduce auto-removals by nearly 35%, which directly boosts engagement. Fewer false positives mean fewer frustrated members leaving.

If you are evaluating where to build your presence, look at discussion platform alternatives that already use hybrid moderation. Starting from scratch is hard. Joining a platform that has already solved this problem is smarter.

Fostering innovation and collaboration through moderation

Well-moderated forums do more than keep the peace. They become actual launchpads. Think expert Q&A threads where a Lagos-based developer gets direct feedback from a Nairobi investor. Co-founder matching sections where a technical founder finds a business partner in Accra. Pitch spaces where early-stage startups get structured feedback before approaching VCs.

In a Pan-African randomized controlled trial, peer interaction in moderated settings boosted business plan submissions and quality, with more diversity in outcomes. The evidence is clear: structured peer interaction drives real entrepreneurial results.

Here is what makes these forums work for innovation:

- Clear goals: Every section of the forum has a defined purpose.

- Active engagement: Moderators participate, not just police.

- Cross-country exchange: Members from different African markets share local knowledge.

- Government and community support: Forums connected to broader ecosystems have more impact.

Forums as communities of practice drive innovation, but unclear objectives and support gaps undermine impact. Structure is what separates a productive forum from a ghost town.

Pro Tip: Invest as much in community-building activities as in rule enforcement. Host monthly challenges, spotlight member wins, and create dedicated spaces for collaboration. This is how you grow your tech career and enable live tech collaboration that goes beyond surface-level networking.

Common pitfalls and optimizing for growth

Even well-intentioned communities fail. Knowing the most common mistakes helps you avoid them before they cost you members and momentum.

The most frequent pitfalls are:

- Unclear objectives. Unclear community goals and lack of structured activities lead to minimal forum impact. If members do not know what the space is for, they will not use it.

- Insufficient engagement. A forum where moderators only show up to delete posts is not a community. It is a bulletin board.

- Over-reliance on automation. AI catches spam. It does not build culture. Leaning too hard on filters creates a cold, transactional environment.

- No feedback loop. Members who feel unheard leave. Regular check-ins and transparent moderation decisions keep trust intact.

- Reactive-only moderation. Waiting for problems to escalate before acting is expensive. Prevention is always cheaper than repair.

"Sustainable communities prioritize peer interaction and positive culture over constant rule enforcement."

Peer resolution and resilient culture-building are more sustainable than constant rule enforcement. The best communities self-regulate because members feel ownership. That only happens when the moderation model is transparent and fair.

Practical fixes are straightforward. Align your community goals with member needs. Run regular check-ins, even simple monthly polls. Be transparent about why content gets removed. And when you launch, choose a discussion platform that already has these systems built in, so you are not building from zero.

Take your next step: Join or start a thriving discussion space

You now have a clear picture of what makes moderated communities work and what causes them to fail. The next step is practical.

Discors.chat is built for exactly this. It is a real-time discussion platform designed for African tech professionals, founders, developers, and entrepreneurs who want to connect without the noise of traditional social media. You can start a discussion, join existing conversations, follow trending topics in tech and startups, and find collaborators or job opportunities in a moderated, safe environment. Sign up with Google or Apple in seconds. Whether you are launching a product, looking for a co-founder, or just want to engage with people building real things across Africa, Discors gives you the structure and the community to make it count.

Frequently asked questions

How does moderation improve online networking for African tech professionals?

Moderation enables safer networking by enforcing clear rules that reduce spam and harassment, making it easier to form genuine connections and share ideas without fear.

What is the difference between AI and human moderation?

AI handles high-volume tasks and detects spam quickly, while humans catch subtle issues like sarcasm or cultural context. AI moderation needs human oversight for nuance, and the best results come from combining both.

Why do some online communities fail despite moderation?

Forums need clear objectives and structured activities to succeed. Without them, even moderated spaces see low participation and minimal real-world impact.

What are effective strategies to prevent conflicts in moderated spaces?

Early intervention, private messaging for de-escalation, and structured peer-to-peer resolution are the most effective tools. Peer resolution reduces moderator work by 60% and early intervention prevents 60% of conflicts before they escalate.