Online discussions can transform your tech community or destroy it. Without proper moderation, harassment escalates, meaningful conversations disappear, and engaged members leave. African tech founders building communities on platforms like Discors.chat face unique challenges: limited moderation resources, diverse time zones, and rapidly growing user bases. This guide provides actionable strategies to moderate discussions effectively, foster safer spaces, and build thriving communities where founders, developers, and entrepreneurs can collaborate, share ideas, and grow together. You’ll learn how to prepare your community, implement smart moderation tools, execute progressive discipline, and continuously improve your approach.

Key takeaways

| Point | Details |
|---|---|
| Clear guidelines prevent conflicts | Specific, observable rules replace vague expectations and reduce moderation workload. |
| Automation handles routine tasks | Bots and filters manage up to 80% of common violations, freeing moderators for complex issues. |
| Progressive discipline works better | Warning, timeout, suspension, and ban steps reduce repeat violations more effectively than immediate bans. |
| Data drives improvement | Tracking metrics like retention rates and violation patterns helps refine moderation strategies over time. |

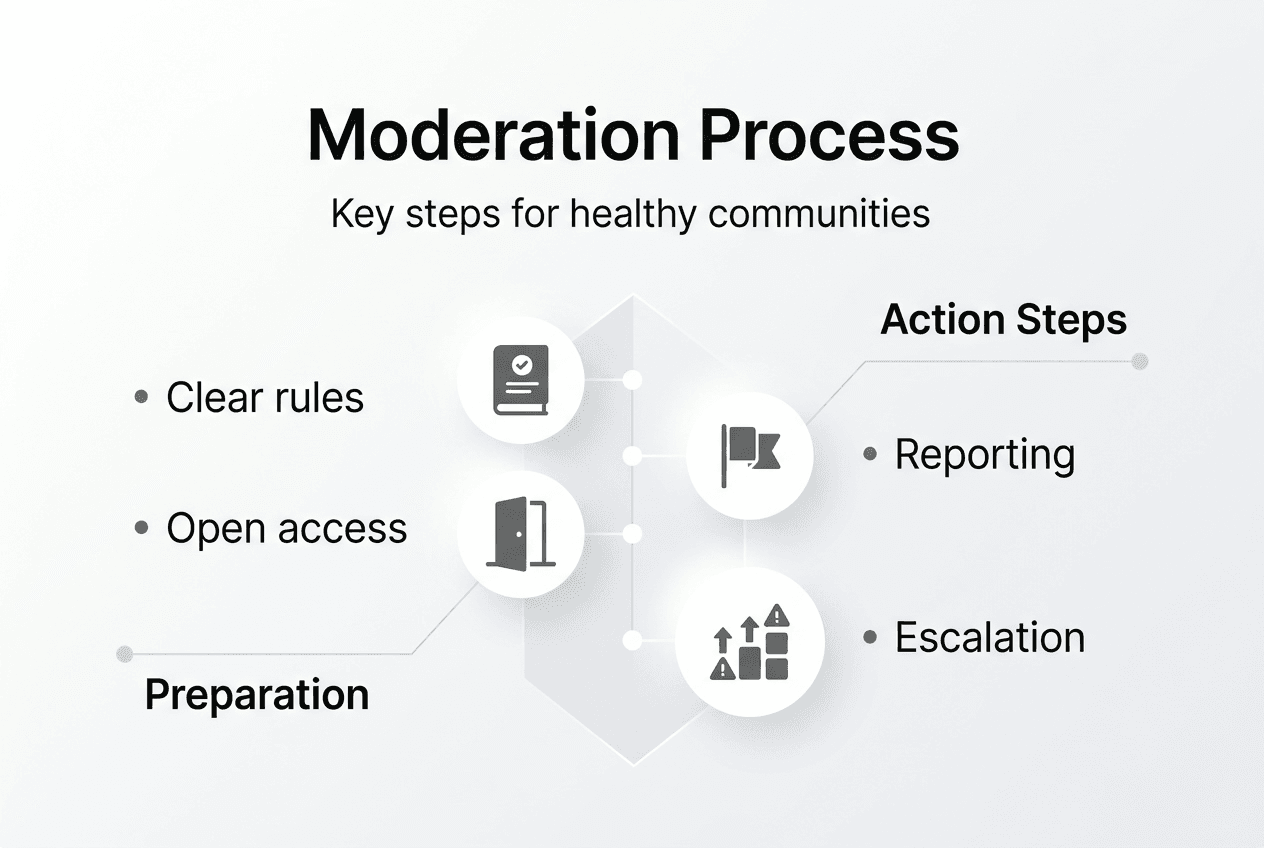

Preparing your community for effective moderation

Successful moderation starts before your first user joins. You need clear rules, a prepared team, and accessible processes that set behavioral expectations from day one.

Start by defining specific, observable rules. Replace vague terms like “be respectful” with concrete examples: “No personal attacks on members’ ideas or backgrounds” or “Share constructive criticism without dismissing others’ contributions.” Transparency in community guidelines sets clear expectations for user behavior and reduces confusion when enforcing rules. When members know exactly what crosses the line, they self-moderate more effectively.

Publish your guidelines where every member can find them easily. Pin them in your main channel, include them in welcome messages, and reference them during onboarding. Clear community guidelines are fundamental to maintaining a healthy chat environment. If you’re launching online discussions for African tech professionals, consider using Discord’s Rules Screening feature or similar tools to ensure new members agree to rules before participating.

Recruit a diverse moderation team that reflects your community’s geographic spread and expertise areas. Building a diverse team with members from different time zones ensures 24/7 coverage and responsiveness. For African communities spanning Lagos to Nairobi to Cape Town, this means recruiting moderators across multiple time zones who understand local contexts and communication styles.

Set up clear reporting and escalation processes so members know how to flag issues and moderators know when to involve senior team members. Create private channels for moderator communication, document decision-making processes, and establish response time expectations. When a harassment report comes in at 2 AM Nairobi time, your Johannesburg moderator should know exactly how to handle it.

Pro Tip: Review and update your moderation guidelines every quarter to reflect your evolving community culture. What worked for 100 members may not work for 1,000, and new issues will emerge as your community grows.

Implementing moderation tools and automation

Technology amplifies your moderation team’s effectiveness, but only when you balance automation with human judgment. The right tools handle repetitive tasks while preserving the nuanced decision-making that builds trust.

Start with bots and keyword filters to catch obvious violations automatically. Automated moderation tools handle up to 80% of common tasks, freeing human moderators for complex situations. Configure filters to flag or remove spam, known slurs, and prohibited content types like phishing links or unauthorized promotions. This baseline protection runs 24/7 without human intervention.
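As a rough illustration of the keyword and link filtering described above, here is a minimal Python sketch. The blocklists, the `check_message` function, and the example domains are all hypothetical placeholders, not part of any specific bot's API; a real deployment would plug this kind of logic into whatever hooks your platform or bot provides.

```python
import re

# Hypothetical blocklists; replace with terms and domains relevant to your community.
BLOCKED_TERMS = ("free crypto giveaway", "click here to claim")
BLOCKED_DOMAINS = ("phish.example", "scam.example")

# Captures the host part of http(s) links.
URL_PATTERN = re.compile(r"https?://([^/\s]+)", re.IGNORECASE)


def check_message(text: str) -> list[str]:
    """Return the reasons a message matched the filter (empty list if clean)."""
    reasons = []
    # Normalize case and collapse repeated whitespace before matching terms.
    lowered = " ".join(text.lower().split())
    for term in BLOCKED_TERMS:
        if term in lowered:
            reasons.append(f"blocked term: {term}")
    for host in URL_PATTERN.findall(text):
        host = host.lower()
        # Match the blocked domain itself or any subdomain of it.
        if any(host == d or host.endswith("." + d) for d in BLOCKED_DOMAINS):
            reasons.append(f"blocked domain: {host}")
    return reasons
```

Returning a list of reasons, rather than a plain yes or no, makes it easier to log why a message was caught and to review false positives later.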

Customize AI-based toxicity filters to fit your community’s context and culture. Off-the-shelf filters often fail because they are blind to context: a heated but respectful debate about startup funding strategies shouldn’t trigger the same response as a personal attack. Train your tools with examples from your actual community conversations.

Balance automated actions with human moderator oversight for nuanced situations. Automated tools detect harmful behaviors using AI-driven content recognition, but they can’t reliably interpret sarcasm, cultural references, or whether a seemingly harsh comment is actually friendly banter between long-time members. Set automation to flag questionable content for human review rather than taking immediate action on edge cases.
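One way to express "flag edge cases, act only on clear ones" is a two-threshold rule. The sketch below assumes a toxicity classifier that returns a score between 0 and 1; the `triage` function, the `Decision` names, and both threshold values are illustrative assumptions that you would tune against messages your own moderators have labeled.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    FLAG_FOR_REVIEW = "flag_for_review"
    REMOVE = "remove"


# Hypothetical thresholds; tune them against your community's labeled examples.
REVIEW_THRESHOLD = 0.5
REMOVE_THRESHOLD = 0.95


def triage(toxicity_score: float) -> Decision:
    """Map a classifier score in [0, 1] to a moderation decision."""
    if not 0.0 <= toxicity_score <= 1.0:
        raise ValueError("toxicity_score must be between 0 and 1")
    if toxicity_score >= REMOVE_THRESHOLD:
        # Only near-certain violations are removed automatically.
        return Decision.REMOVE
    if toxicity_score >= REVIEW_THRESHOLD:
        # Borderline content goes to a human moderator queue.
        return Decision.FLAG_FOR_REVIEW
    return Decision.ALLOW
```

Keeping the automatic-removal threshold high means most uncertain cases land in front of a person, which matches the goal of preserving human judgment for nuanced situations.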

| Tool Type | Best For | Limitations |
|---|---|---|
| Keyword filters | Blocking known slurs and spam | Misses context and creative spelling |
| AI toxicity detection | Identifying hostile tone and harassment | Cultural context blindness |
| Raid protection bots | Stopping coordinated attacks | Requires configuration for your server size |
| Audit logging | Tracking moderator actions and patterns | Needs regular human review |

Explore Discord moderation tools that integrate with your platform and match your community size. Popular options include MEE6, Dyno, and Carl-bot for Discord-based communities, while platforms like Discors.chat offer built-in moderation features designed for tech professional communities. Consider automation for engagement alongside moderation to maintain active participation.

Pro Tip: Train your automated tools continuously with human feedback to reduce false positives. When a bot incorrectly flags content, mark it as a learning example so the system improves over time.

Executing progressive moderation and conflict resolution

How you respond to violations shapes your community culture more than your written rules. Progressive discipline and constructive conflict resolution turn potential disasters into learning opportunities.

Outline a step-by-step progressive discipline policy that escalates with repeated violations. Progressive discipline such as warnings and timeouts is more effective than immediate bans for minor infractions. Your policy might look like this:

- First offense: Private warning explaining the violation and expected behavior
- Second offense: Temporary timeout (24-48 hours) with clear explanation
- Third offense: Longer suspension (7-14 days) and final warning
- Fourth offense: Permanent ban with appeal process

Adjust this framework based on violation severity. Spam might follow this progression, while doxing or credible threats warrant immediate bans. Document every action in your moderation logs so you can track patterns and ensure consistency.
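The ladder and its severity override can be sketched in a few lines of Python. The `next_action` function and the violation labels are hypothetical names chosen for illustration; the steps themselves mirror the example policy above.

```python
# Escalation ladder from the example policy; index 0 is the first offense.
LADDER = (
    "private warning",
    "timeout (24-48 hours)",
    "suspension (7-14 days) and final warning",
    "permanent ban with appeal process",
)

# Violations severe enough to skip the ladder (labels are illustrative).
IMMEDIATE_BAN = {"doxing", "credible threat"}


def next_action(violation: str, prior_offenses: int) -> str:
    """Pick the consequence for a violation given the member's history."""
    if prior_offenses < 0:
        raise ValueError("prior_offenses cannot be negative")
    if violation in IMMEDIATE_BAN:
        return "immediate ban"
    # Cap at the last step so repeat offenders stay at the final consequence.
    step = min(prior_offenses, len(LADDER) - 1)
    return LADDER[step]
```

For example, `next_action("spam", 0)` returns the private warning, while `next_action("doxing", 0)` returns an immediate ban regardless of history.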

Encourage moderators to de-escalate conflicts respectfully without engaging in power struggles. When two members argue about the best programming language for African startups, your moderator shouldn’t pick sides or flex authority. Instead, they should redirect the conversation toward productive dialogue: “Both Python and JavaScript have merits for different use cases. Let’s focus on specific project requirements rather than absolute judgments.”

Use Audit Log features to maintain accountability and spot concerning trends. Regular log reviews reveal whether certain moderators apply rules inconsistently, whether specific channels generate disproportionate violations, or whether particular times of day see more conflicts. This data informs training needs and policy adjustments.
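If your platform lets you export moderation logs, a short script can surface these patterns. The sketch below assumes a simple list-of-dictionaries log format with made-up entries; real audit logs will have a different shape, so treat the field names and the `summarize` function as placeholders.

```python
from collections import Counter
from datetime import datetime

# Hypothetical log entries for illustration only.
audit_log = [
    {"moderator": "ada", "channel": "#funding", "action": "warning", "at": "2024-05-01T21:15:00"},
    {"moderator": "ada", "channel": "#funding", "action": "timeout", "at": "2024-05-01T21:40:00"},
    {"moderator": "kofi", "channel": "#general", "action": "warning", "at": "2024-05-02T09:05:00"},
    {"moderator": "kofi", "channel": "#funding", "action": "ban", "at": "2024-05-02T21:55:00"},
]


def summarize(entries):
    """Count moderation actions by channel, hour of day, and moderator/action pair."""
    by_channel = Counter(e["channel"] for e in entries)
    by_hour = Counter(datetime.fromisoformat(e["at"]).hour for e in entries)
    by_moderator_action = Counter((e["moderator"], e["action"]) for e in entries)
    return by_channel, by_hour, by_moderator_action


by_channel, by_hour, by_moderator_action = summarize(audit_log)
print(by_channel.most_common(3))  # channels with the most moderation actions
print(by_hour.most_common(3))     # hours of day with the most moderation actions
```

Comparing the moderator/action counts across team members is one simple way to spot whether similar violations are being handled differently.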

“Unmanaged conflict destroys communities.” Lena Tran, Community Governance Consultant

Train moderators to apply rules consistently across all members, reducing resentment and confusion. Nothing erodes trust faster than perceived favoritism. If a prominent community member violates rules, they receive the same consequences as a new member. Document your decisions and explain them transparently when appropriate.

When conflicts arise, guide your team through these resolution steps:

- Separate the immediate issue from personal feelings
- Listen to all parties involved before making decisions
- Reference specific rule violations rather than character judgments
- Explain consequences clearly and offer paths to restoration
- Follow up privately with involved parties after public resolution

This approach maintains dignity while enforcing boundaries, helping members understand that moderation protects the community rather than punishing individuals. Strong moderation also amplifies the benefits of community participation by creating safer spaces for collaboration and idea sharing.

Monitoring, evaluating, and continuously improving moderation

Effective moderation evolves with your community. Regular evaluation and data-driven adjustments keep your approach relevant as your community grows and changes.

Review moderation logs and community reports weekly to identify patterns that inform policy updates. Are certain rule violations increasing? Do specific discussion topics consistently generate conflicts? Are moderator response times meeting your standards? Effective moderation significantly increases community engagement and reduces toxicity, but only when you actively refine your approach based on real outcomes.

Track key metrics that reveal your moderation’s impact on community health. User retention rates show whether members stick around after their first week. Rule violation rates indicate whether your guidelines and enforcement are working. Incident report volumes reveal whether members trust your moderation process enough to flag issues. Response time averages demonstrate your team’s availability and efficiency.

| Metric | What It Reveals | Review Frequency |
|---|---|---|
| User retention rate | Whether moderation creates a welcoming environment | Monthly |
| Rule violation rate | Effectiveness of guidelines and enforcement | Weekly |
| Incident report volume | Member trust in moderation process | Weekly |
| Moderator response time | Team availability and efficiency | Daily |
| Appeal success rate | Fairness and accuracy of initial decisions | Monthly |
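Several of the metrics in the table above reduce to simple ratios. The Python sketch below shows one plausible way to compute them; the exact definitions (for example, what counts as "still active") are assumptions you should adapt to your own community, and all function names are illustrative.

```python
from statistics import mean


def retention_rate(joined: set[str], still_active: set[str]) -> float:
    """Share of members who joined in a period and are still active afterwards."""
    if not joined:
        return 0.0
    return len(joined & still_active) / len(joined)


def violation_rate(violations: int, messages: int) -> float:
    """Rule violations per message posted in the same period."""
    return violations / messages if messages else 0.0


def appeal_success_rate(appeals_filed: int, appeals_upheld: int) -> float:
    """Share of appeals that overturned the original decision."""
    return appeals_upheld / appeals_filed if appeals_filed else 0.0


def average_response_minutes(response_minutes: list[float]) -> float:
    """Mean time between a report and the first moderator response."""
    return mean(response_minutes) if response_minutes else 0.0
```

Each function returns 0.0 for an empty period so that a quiet week does not raise a division error in a reporting script.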

Update community guidelines and moderator training based on data insights and member feedback. If appeals frequently overturn certain types of decisions, your rules may be unclear or your moderators need additional training. If new violation types emerge, add them to your guidelines and brief your team. Transparency in community guidelines remains key as you evolve your approach.

Implement these best practices for transparency and continuous improvement:

- Publish anonymized moderation statistics monthly to demonstrate accountability
- Solicit member feedback quarterly through surveys or discussion threads
- Host regular moderator training sessions covering new scenarios and policy updates
- Create a public changelog documenting guideline updates and the reasoning behind them
- Establish a clear appeal process that gives members recourse for perceived unfair decisions
- Celebrate moderation wins by highlighting how intervention prevented escalation or resolved conflicts

Consider how different community platform options support your evolving moderation needs. As your community scales from dozens to hundreds or thousands of members, you may need more sophisticated tools, additional moderators, or platform features that weren’t necessary initially.

Schedule quarterly retrospectives where your moderation team discusses what’s working, what’s not, and what changes would improve effectiveness. These sessions build team cohesion and surface insights that individual moderators might not recognize on their own. Your most experienced moderators become trainers for new team members, creating a culture of continuous learning.

Try Discors.chat to build and moderate your community

Moderation challenges shouldn’t stop you from building the thriving tech community your vision deserves. Discors.chat provides a discussion-first platform designed specifically for African founders, developers, and entrepreneurs who want meaningful connections without the chaos of traditional social media.

The platform combines real-time discussion, content discovery, and networking features with built-in moderation tools that support both automation and human oversight. You can set clear community guidelines, implement progressive discipline, and track engagement metrics all in one place. Whether you’re launching a startup community in Lagos, connecting developers across East Africa, or building a network for tech investors, Discors.chat helps you create safer spaces where authentic conversations flourish. Sign up with Google or Apple to start building your moderated community today.

Frequently asked questions

What are the key qualities of an effective discussion moderator?

Patience, consistency, fairness, and strong communication skills form the foundation of effective moderation. Moderators must remain calm during heated exchanges, apply rules uniformly across all members, make decisions based on guidelines rather than personal preferences, and explain their actions clearly. They also need cultural awareness to understand context in diverse communities and the judgment to balance automation with human discretion. Technical proficiency with moderation tools and the ability to de-escalate conflicts without power struggles are equally important.

How can I handle toxic behavior without discouraging engagement?

Use progressive discipline that educates before punishing, giving members opportunities to correct behavior. Apply your rules transparently by explaining why specific actions violated guidelines and what behavior you expect instead. Encourage positive interactions by highlighting constructive contributions and modeling the tone you want to see. When you remove toxic content, do so privately when possible to avoid public shaming that might discourage others from participating. Focus enforcement on protecting community safety rather than controlling all disagreement, allowing spirited debate within respectful boundaries.

What are the best tools to automate moderation on platforms like Discors.chat?

Popular automation tools include MEE6, Dyno, Carl-bot, and Peak Bot for Discord-based communities, each offering keyword filtering, auto-moderation, and logging features. Custom AI filters trained on your community’s specific context handle toxicity detection more accurately than generic solutions. Discors.chat includes built-in moderation automation tools designed for tech professional communities. Balance automation with human moderators who handle context-sensitive situations, cultural nuances, and edge cases that algorithms miss. Start with basic keyword filters and raid protection, then add AI-based toxicity detection as your community grows.

How do I keep my moderation team motivated?

Recognize moderator efforts regularly through private appreciation messages, public acknowledgment of great calls, and periodic team celebrations. Provide constructive feedback that helps moderators improve their skills while affirming what they do well. Ensure your team has clear guidelines that reduce decision anxiety and appropriate autonomy to act without constant approval. Rotate challenging assignments so no single moderator bears the burden of handling the most difficult situations. Create opportunities for moderators to shape policy and contribute to community direction, giving them ownership beyond enforcement. Consider small perks like exclusive roles, early access to features, or modest compensation if your budget allows.